Export data to Spanner (reverse ETL)

This document describes how you can set up a reverse extract, transform, and

load (reverse ETL) workflow from BigQuery to Spanner. You can

do this by using the

EXPORT DATA statement

to export data from BigQuery data sources, including

Iceberg tables, to a

Spanner table.

This reverse ETL workflow combines analytic capabilities in BigQuery with low latency and high throughput in Spanner. This workflow lets you serve data to application users without exhausting quotas and limits on BigQuery.

Before you begin

Create a Spanner database including a table to receive the exported data.

Grant Identity and Access Management (IAM) roles that give users the necessary permissions to perform each task in this document.

Create an Enterprise or a higher tier reservation. You might reduce BigQuery compute costs when you run one-time exports to Spanner by setting a baseline slot capacity of zero and enabling autoscaling.

Required roles

To get the permissions that you need to export BigQuery data to Spanner, ask your administrator to grant you the following IAM roles on your project:

-

Export data from a BigQuery table:

BigQuery Data Viewer (

roles/bigquery.dataViewer) -

Run an extract job:

BigQuery User (

roles/bigquery.user) -

Check parameters of the Spanner instance:

Cloud Spanner Viewer (

roles/spanner.viewer) -

Write data to a Spanner table:

Cloud Spanner Database User (

roles/spanner.databaseUser)

For more information about granting roles, see Manage access to projects, folders, and organizations.

You might also be able to get the required permissions through custom roles or other predefined roles.

Limitations

This feature is not supported in Assured Workloads.

The following BigQuery data types don't have equivalents in Spanner and are not supported:

| Spanner database dialect | Unsupported BigQuery types |

|---|---|

| All dialects |

|

| GoogleSQL |

|

The maximum size of an exported row cannot exceed 1 MiB.

Spanner enforces referential integrity during the export. If the target table is a child of another table (INTERLEAVE IN PARENT), or if the target table has foreign key constraints, the foreign keys and parent key will be validated during the export. If an exported row is written to a table with INTERLEAVE IN PARENT and the parent row doesn't exist, the export will fail with "Parent row is missing. Row cannot be written" error. If the exported row is written to a table with foreign key constraints and is referencing a key that doesn't exist, the export will fail with "Foreign key constraint is violated" error. When exporting to multiple tables, we recommend sequencing the export to ensure that referential integrity will be maintained through the export. This usually means exporting parent tables and tables that are referenced by foreign keys before tables that reference them.

If the table that is the target of the export has foreign key constraints, or is a child of another table (INTERLEAVE IN PARENT), the parent table must be populated before a child table export, and should contain all the corresponding keys. An attempt to export a child table while a parent table does not have the complete set of relevant keys will fail.

A BigQuery job, such as an extract job to Spanner, has a maximum duration of 6 hours. For information about optimizing large extract jobs, see Export optimization. Alternatively, consider splitting the input into individual blocks of data, which may be exported as individual extract jobs.

Exports to Spanner are only supported for the BigQuery Enterprise or Enterprise Plus editions. The BigQuery Standard edition and on-demand compute are not supported.

You cannot use continuous queries to export to Spanner tables with auto-generated primary keys.

You cannot use continuous queries to export to Spanner tables in a PostgreSQL-dialect database.

When using continuous queries to export to a Spanner table, ensure that you choose a primary key that doesn't correspond to a monotonically increasing integer in your BigQuery table. Doing so might cause performance issues in your export. For information about primary keys in Spanner, and ways to mitigate these performance issues, see Choose a primary key.

Configure exports with spanner_options option

You can use the spanner_options option to specify a destination

Spanner database and table. The configuration is expressed in

the form of a JSON string, as the following example shows:

EXPORT DATA OPTIONS( uri="https://spanner.googleapis.com/projects/PROJECT_ID/instances/INSTANCE_ID/databases/DATABASE_ID", format='CLOUD_SPANNER', spanner_options = """{ "table": "TABLE_NAME", "change_timestamp_column": "CHANGE_TIMESTAMP", "priority": "PRIORITY", "tag": "TAG", }""" )

Replace the following:

PROJECT_ID: the name of your Google Cloud project.INSTANCE_ID: the name of your database instance.DATABASE_ID: the name of your database.TABLE_NAME: the name of an existing destination table.CHANGE_TIMESTAMP: the name of theTIMESTAMPtype column in the destination Spanner table. This option is used during export to track the timestamp of the most recent row update. When this option is specified, the export first performs a read of the row in the Spanner table, to ensure that only the latest row update is written. We recommend specifying aTIMESTAMPtype column when you run a continuous export, where the ordering of changes to rows with the same primary key is important.PRIORITY(optional): priority of the write requests. Allowed values:LOW,MEDIUM,HIGH. Default value:MEDIUM.TAG(optional): request tag to help identify exporter traffic in Spanner monitoring. Default value:bq_export.

Export query requirements

To export query results to Spanner, the results must meet the following requirements:

- All columns in the result set must exist in the destination table, and their types must match or be convertible.

- The result set must contain all

NOT NULLcolumns for the destination table. - Column values must not exceed Spanner data size limits within tables.

- Any unsupported column types must be converted to one of the supported types before exporting to Spanner.

Type conversions

For ease of use, Spanner exporter automatically applies the following type conversions:

| BigQuery type | Spanner type |

|---|---|

| BIGNUMERIC | NUMERIC (PostgreSQL dialect only) |

| FLOAT64 | FLOAT32 |

| BYTES | PROTO |

| INT64 | ENUM |

Export data

You can use the

EXPORT DATA statement

to export data from a BigQuery table into a

Spanner table.

The following example exports selected fields from a table that's named

mydataset.table1:

EXPORT DATA OPTIONS ( uri="https://spanner.googleapis.com/projects/PROJECT_ID/instances/INSTANCE_ID/databases/DATABASE_ID", format='CLOUD_SPANNER', spanner_options="""{ "table": "TABLE_NAME" }""" ) AS SELECT * FROM mydataset.table1;

Replace the following:

PROJECT_ID: the name of your Google Cloud projectINSTANCE_ID: the name of your database instanceDATABASE_ID: the name of your databaseTABLE_NAME: the name of an existing destination table

Export multiple results with the same rowkey value

When you export a result containing multiple rows with the same rowkey value,

values written to Spanner end up in the same

Spanner row. Only single matching BigQuery row

(there is no guarantee which one) will be present in the Spanner

row set produced by export.

Export using a CLOUD_RESOURCE Connection

You can delegate write permissions to a BigQuery CLOUD_RESOURCE connection to

run exports without giving a user access direct access to the Spanner database.

Before you export to Spanner with a CLOUD_RESOURCE connection, do the following:

Create a connection

You can create or use an existing

CLOUD_RESOURCE connection

to connect to Spanner.

Console

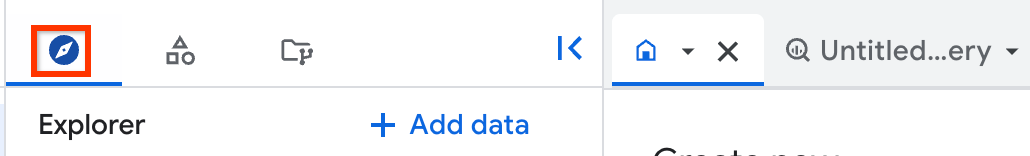

Go to the BigQuery page.

In the left pane, click Explorer:

If you don't see the left pane, click Expand left pane to open the pane.

In the Explorer pane, expand your project name, and then click Connections.

On the Connections page, click Create connection.

For Connection type, choose Vertex AI remote models, remote functions, BigLake and Spanner (Cloud Resource).

In the Connection ID field, enter a name for your connection.

For Location type, select a location for your connection. The connection should be colocated with your other resources such as datasets.

Click Create connection.

Click Go to connection.

In the Connection info pane, copy the service account ID for use in a later step.

SQL

Use the CREATE CONNECTION statement:

In the Google Cloud console, go to the BigQuery page.

In the query editor, enter the following statement:

CREATE CONNECTION [IF NOT EXISTS] `CONNECTION_NAME` OPTIONS ( connection_type = "CLOUD_RESOURCE", friendly_name = "FRIENDLY_NAME", description = "DESCRIPTION" );

Replace the following:

-

CONNECTION_NAME: the name of the connection in either thePROJECT_ID.LOCATION.CONNECTION_ID,LOCATION.CONNECTION_ID, orCONNECTION_IDformat. If the project or location are omitted, then they are inferred from the project and location where the statement is run. -

FRIENDLY_NAME(optional): a descriptive name for the connection. -

DESCRIPTION(optional): a description of the connection.

-

Click Run.

For more information about how to run queries, see Run an interactive query.

bq

In a command-line environment, create a connection:

bq mk --connection --location=REGION --project_id=PROJECT_ID \ --connection_type=CLOUD_RESOURCE CONNECTION_ID

The

--project_idparameter overrides the default project.Replace the following:

REGION: your connection regionPROJECT_ID: your Google Cloud project IDCONNECTION_ID: an ID for your connection

When you create a connection resource, BigQuery creates a unique system service account and associates it with the connection.

Troubleshooting: If you get the following connection error, update the Google Cloud SDK:

Flags parsing error: flag --connection_type=CLOUD_RESOURCE: value should be one of...

Retrieve and copy the service account ID for use in a later step:

bq show --connection PROJECT_ID.REGION.CONNECTION_ID

The output is similar to the following:

name properties 1234.REGION.CONNECTION_ID {"serviceAccountId": "connection-1234-9u56h9@gcp-sa-bigquery-condel.iam.gserviceaccount.com"}

Python

Before trying this sample, follow the Python setup instructions in the BigQuery quickstart using client libraries. For more information, see the BigQuery Python API reference documentation.

To authenticate to BigQuery, set up Application Default Credentials. For more information, see Set up authentication for client libraries.

Node.js

Before trying this sample, follow the Node.js setup instructions in the BigQuery quickstart using client libraries. For more information, see the BigQuery Node.js API reference documentation.

To authenticate to BigQuery, set up Application Default Credentials. For more information, see Set up authentication for client libraries.

Terraform

Use the

google_bigquery_connection

resource.

To authenticate to BigQuery, set up Application Default Credentials. For more information, see Set up authentication for client libraries.

The following example creates a Cloud resource connection named

my_cloud_resource_connection in the US region:

To apply your Terraform configuration in a Google Cloud project, complete the steps in the following sections.

Prepare Cloud Shell

- Launch Cloud Shell.

-

Set the default Google Cloud project where you want to apply your Terraform configurations.

You only need to run this command once per project, and you can run it in any directory.

export GOOGLE_CLOUD_PROJECT=PROJECT_ID

Environment variables are overridden if you set explicit values in the Terraform configuration file.

Prepare the directory

Each Terraform configuration file must have its own directory (also called a root module).

-

In Cloud Shell, create a directory and a new

file within that directory. The filename must have the

.tfextension—for examplemain.tf. In this tutorial, the file is referred to asmain.tf.mkdir DIRECTORY && cd DIRECTORY && touch main.tf

-

If you are following a tutorial, you can copy the sample code in each section or step.

Copy the sample code into the newly created

main.tf.Optionally, copy the code from GitHub. This is recommended when the Terraform snippet is part of an end-to-end solution.

- Review and modify the sample parameters to apply to your environment.

- Save your changes.

-

Initialize Terraform. You only need to do this once per directory.

terraform init

Optionally, to use the latest Google provider version, include the

-upgradeoption:terraform init -upgrade

Apply the changes

-

Review the configuration and verify that the resources that Terraform is going to create or

update match your expectations:

terraform plan

Make corrections to the configuration as necessary.

-

Apply the Terraform configuration by running the following command and entering

yesat the prompt:terraform apply

Wait until Terraform displays the "Apply complete!" message.

- Open your Google Cloud project to view the results. In the Google Cloud console, navigate to your resources in the UI to make sure that Terraform has created or updated them.

After you create the connection, open it. In the Connection info pane, copy the service account ID. You will need this ID when you configure permissions for the connection. When you create a connection resource, BigQuery creates a unique system service account and associates it with the connection.

Set up access

You must give the service account that is associated with the new connection write access to your

Spanner instance or database. We recommend that you use the

Cloud Spanner Database User (roles/spanner.databaseUser) predefined IAM role.

These steps require the service account ID that you copied when you created your connection.

To grant access to database-level roles for the service account, do the following:

Go to the Spanner instances page.

Click the name of the instance that contains your database.

In the Overview tab, select the checkbox for your database.

The Info panel dialog appears. Click Add principal.

For New principals, enter the service account ID that you copied earlier.

In the Select a role field, select a role with

spanner.databases.writepermissions. We recommend that you use the Cloud Spanner Database User role.Click Save.

Run the export using the CLOUD_RESOURCE connection

With the connection created and the appropriate access granted to it, you can run the export using the CLOUD_RESOURCE connection. The following example shows an EXPORT command that exports with a CLOUD_RESOURCE connection.

EXPORT DATA WITH CONNECTION `PROJECT_ID.LOCATION.CONNECTION_NAME` OPTIONS ( uri="https://spanner.googleapis.com/projects/PROJECT_ID/instances/INSTANCE_ID/databases/DATABASE_ID", format='CLOUD_SPANNER', spanner_options="""{ "table": "SPANNER_TABLE_NAME" }""" ) AS SELECT * FROM my_bq_dataset.table1;

Replace the following:

PROJECT_ID: the name of your Google Cloud project.LOCATION: the location where you created the connection—for example,us.CONNECTION_NAME: the name of the connection being used to run the export—for example,myconnection.INSTANCE_ID: the name of your Spanner database instance.DATABASE_ID: the name of your Spanner database.SPANNER_TABLE_NAME: The name of the existing destination Spanner table.

Export continuously

To continuously process an export query, see Create continuous queries for instructions and example code.

Export optimization

To optimize the export of records from BigQuery to Spanner, you can try the following:

Increase the number of nodes in the Spanner destination instance. During the early stages of the export, increasing the number of nodes in the instance might not immediately increase export throughput. A slight delay can occur while Spanner performs load-based splitting. With load-based splitting, the export throughput grows and stabilizes. Using the

EXPORT DATAstatement batches data to optimize writes to Spanner. For more information, see Performance overview.Specify

HIGHpriority withinspanner_options. If your Spanner instance has autoscaling enabled, settingHIGHpriority helps ensure that CPU utilization reaches the necessary threshold to trigger scaling. This allows the autoscaler to add compute resources in response to the export load, which can improve overall export throughput.The following example shows a Spanner export command set to

HIGHpriority:EXPORT DATA OPTIONS ( uri="https://spanner.googleapis.com/projects/

PROJECT_ID/instances/INSTANCE_ID/databases/DATABASE_ID", format='CLOUD_SPANNER', spanner_options="""{ "table": "TABLE_NAME", "priority": "LOW" }""" )Avoid ordering the query results. If the result set contains all primary key columns, the exporter automatically sorts the primary keys of the destination table to streamline writes and minimize contention.

If the destination table's primary key includes generated columns, add the generated columns' expressions to the query to ensure that the exported data is sorted and batched properly.

For example, in the following Spanner schema,

SaleYearandSaleMonthare generated columns that make up the beginning of the Spanner primary key:CREATE TABLE Sales ( SaleId STRING(36) NOT NULL, ProductId INT64 NOT NULL, SaleTimestamp TIMESTAMP NOT NULL, Amount FLOAT64, -- Generated columns SaleYear INT64 AS (EXTRACT(YEAR FROM SaleTimestamp)) STORED, SaleMonth INT64 AS (EXTRACT(MONTH FROM SaleTimestamp)) STORED, ) PRIMARY KEY (SaleYear, SaleMonth, SaleId);

When you export data from BigQuery to a Spanner table with generated columns used in the primary key, it is recommended, but not required, to include the expressions for these generated columns in your

EXPORT DATAquery. This lets BigQuery pre-sort the data correctly, which is critical for efficient batching and writing to Spanner. The values for the generated columns in theEXPORT DATAstatement aren't committed in Spanner, because they are auto-generated by Spanner, but they are used to optimize the export.The following example exports data to a Spanner

Salestable whose primary key uses generated columns. To optimize write performance, the query includesEXTRACTexpressions that match the generatedSaleYearandSaleMonthcolumns, letting BigQuery pre-sort the data before export:EXPORT DATA OPTIONS ( uri="https://spanner.googleapis.com/projects/

PROJECT_ID/instances/INSTANCE_ID/databases/DATABASE_ID", format='CLOUD_SPANNER', spanner_options="""{ "table": "Sales" }""" ) AS SELECT s.SaleId, s.ProductId, s.SaleTimestamp, s.Amount, -- Add expressions that match the generated columns in the Spanner PK EXTRACT(YEAR FROM s.SaleTimestamp) AS SaleYear, EXTRACT(MONTH FROM s.SaleTimestamp) AS SaleMonth FROM my_dataset.sales_export AS s;To prevent long running jobs, export data by partition. Shard your BigQuery data using a partition key, such as a timestamp in your query:

EXPORT DATA OPTIONS ( uri="https://spanner.googleapis.com/projects/

PROJECT_ID/instances/INSTANCE_ID/databases/DATABASE_ID", format='CLOUD_SPANNER', spanner_options="""{ "table": "TABLE_NAME", "priority": "MEDIUM" }""" ) AS SELECT * FROM 'mydataset.table1' d WHERE d.timestamp >= TIMESTAMP '2025-08-28T00:00:00Z' AND d.timestamp < TIMESTAMP '2025-08-29T00:00:00Z';This lets the query complete within the 6-hour job runtime. For more information about these limits, see the query job limits.

To improve data loading performance, drop the index in the Spanner table where data is imported. Then, recreate it after the import completes.

We recommend starting with one Spanner node (1000 processor units) and a minimal BigQuery slot reservation. For example, 100 slots, or 0 baseline slots with autoscaling. For exports under 100 GB, this configuration typically completes within the 6-hour job limit. For exports larger than 100 GB, increase throughput by scaling up Spanner nodes and BigQuery slot reservations, as needed. Throughput scales at approximately 5 MiB/s per node.

Pricing

When you export data to Spanner using the EXPORT DATA statement,

you are billed using

BigQuery capacity compute pricing.

To export continuously to Spanner using a continuous query, you

must have a

BigQuery Enterprise or Enterprise Plus edition

slot reservation and a reservation assignment

that uses the CONTINUOUS job type.

BigQuery exports to Spanner that cross regional boundaries are charged using data extraction rates. For more information, see BigQuery pricing. To avoid data transfer charges, make sure that your BigQuery export runs in the same region as the Spanner default leader.

After the data is exported, you're charged for storing the data in Spanner. For more information, see Spanner pricing.