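This document describes how to deploy the OpenTelemetry Collector, configure it to use the otlphttp exporter and the Telemetry (OTLP) API, and run a telemetry generator that writes metrics to Cloud Monitoring. You then view those metrics in Cloud Monitoring.

If you use Google Kubernetes Engine, you can follow "Managed OpenTelemetry for GKE" instead of manually deploying and configuring an OpenTelemetry Collector that uses the Telemetry API.

If you use an SDK to send metrics from an application directly to the Telemetry API, see "Send metrics from applications by using SDKs" for additional information and examples.

You can also use the OpenTelemetry Collector and the Telemetry API together with OpenTelemetry zero-code instrumentation. For details, see "Use OpenTelemetry zero-code instrumentation for Java".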

Before you begin

This section describes how to set up your environment so that you can deploy and use the collector.

Select or create a Google Cloud project

Choose a Google Cloud project for this walkthrough. If you don't already have a Google Cloud project, create one:

- Sign in to your Google Cloud account. If you're new to Google Cloud, create an account to evaluate how the products perform in real-world scenarios. New customers also get $300 in free credits to run, test, and deploy workloads.

- In the Google Cloud console, on the project selector page, select or create a Google Cloud project.

  Roles required to select or create a project

  - Select a project: Selecting a project doesn't require a specific IAM role; you can select any project that you've been granted a role on.
  - Create a project: To create a project, you need the Project Creator role (roles/resourcemanager.projectCreator), which contains the resourcemanager.projects.create permission. Learn how to grant roles.

- Verify that billing is enabled for your Google Cloud project.

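Install command-line tools

This document uses the following command-line tools:

- gcloud
- kubectl

The gcloud and kubectl tools are part of the Google Cloud CLI. For information about installing them, see "Managing Google Cloud CLI components". To see which gcloud CLI components you have installed, run the following command:

gcloud components list

To configure the gcloud CLI for use, run the following commands:

gcloud auth login
gcloud config set project PROJECT_ID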
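Before continuing, it can help to confirm that both tools are actually on your PATH. The following is a minimal sketch of such a preflight check; the function name missing_tools is a hypothetical helper introduced here for illustration and is not part of the Google Cloud CLI.

```shell
# Hypothetical helper: print the name of every listed tool that is not on PATH.
# Prints nothing when all tools are available.
missing_tools() {
  for tool in "$@"; do
    command -v "$tool" >/dev/null 2>&1 || printf '%s\n' "$tool"
  done
}
```

For example, running `missing_tools gcloud kubectl` prints nothing when both tools are installed, and prints the name of each tool that still needs to be installed otherwise.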

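Enable APIs

Enable the Cloud Monitoring API and the Telemetry API in your Google Cloud project. Pay particular attention to the Telemetry API, telemetry.googleapis.com; this might be the first time you've encountered it.

To enable the APIs, run the following commands:

gcloud services enable monitoring.googleapis.com
gcloud services enable telemetry.googleapis.com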
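To double-check that both APIs are enabled, you can list the enabled services and look for the two names. The sketch below assumes the `value(config.name)` output format of `gcloud services list`; the function name api_enabled is a hypothetical helper introduced for illustration.

```shell
# Hypothetical helper: succeed (exit 0) only if the given API appears in the
# project's list of enabled services.
api_enabled() {
  project_id="$1"
  api="$2"
  gcloud services list --enabled --project "$project_id" \
    --format="value(config.name)" | grep -qxF "$api"
}
```

For example, `api_enabled PROJECT_ID telemetry.googleapis.com && echo enabled` prints `enabled` only when the Telemetry API is turned on for that project.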

Create a cluster

Create a GKE cluster.

To create a Google Kubernetes Engine cluster named otlp-test, run the following command:

gcloud container clusters create-auto --location CLUSTER_LOCATION otlp-test --project PROJECT_ID

After the cluster is created, connect to it by running the following command:

gcloud container clusters get-credentials otlp-test --region CLUSTER_LOCATION --project PROJECT_ID

Authorize the Kubernetes service account

The following commands grant the necessary Identity and Access Management (IAM) roles to the Kubernetes service account. These commands assume that you are using Workload Identity Federation for GKE:

export PROJECT_NUMBER=$(gcloud projects describe PROJECT_ID --format="value(projectNumber)")

gcloud projects add-iam-policy-binding projects/PROJECT_ID \
  --role=roles/logging.logWriter \
  --member=principal://iam.googleapis.com/projects/$PROJECT_NUMBER/locations/global/workloadIdentityPools/PROJECT_ID.svc.id.goog/subject/ns/opentelemetry/sa/opentelemetry-collector \
  --condition=None

gcloud projects add-iam-policy-binding projects/PROJECT_ID \
  --role=roles/monitoring.metricWriter \
  --member=principal://iam.googleapis.com/projects/$PROJECT_NUMBER/locations/global/workloadIdentityPools/PROJECT_ID.svc.id.goog/subject/ns/opentelemetry/sa/opentelemetry-collector \
  --condition=None

gcloud projects add-iam-policy-binding projects/PROJECT_ID \
  --role=roles/telemetry.tracesWriter \
  --member=principal://iam.googleapis.com/projects/$PROJECT_NUMBER/locations/global/workloadIdentityPools/PROJECT_ID.svc.id.goog/subject/ns/opentelemetry/sa/opentelemetry-collector \
  --condition=None
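The three bindings above differ only in the role, so they can also be expressed as a loop. The following sketch assumes the same namespace (opentelemetry) and service account (opentelemetry-collector) as the commands above; the function names wi_principal and grant_collector_roles are hypothetical helpers introduced for illustration.

```shell
# Hypothetical helper: build the Workload Identity Federation principal for a
# Kubernetes service account (project ID, project number, namespace, account).
wi_principal() {
  printf 'principal://iam.googleapis.com/projects/%s/locations/global/workloadIdentityPools/%s.svc.id.goog/subject/ns/%s/sa/%s\n' \
    "$2" "$1" "$3" "$4"
}

# Hypothetical helper: grant the three roles used in this document to the
# collector's Kubernetes service account. Stops at the first failure.
grant_collector_roles() {
  project_id="$1"
  project_number="$(gcloud projects describe "$project_id" \
    --format="value(projectNumber)")" || return 1
  member="$(wi_principal "$project_id" "$project_number" \
    opentelemetry opentelemetry-collector)"
  for role in roles/logging.logWriter \
              roles/monitoring.metricWriter \
              roles/telemetry.tracesWriter; do
    gcloud projects add-iam-policy-binding "projects/$project_id" \
      --role="$role" --member="$member" --condition=None || return 1
  done
}
```

Calling `grant_collector_roles PROJECT_ID` issues the same three `add-iam-policy-binding` commands as above. Keeping the principal string in one helper makes it easier to adapt if your namespace or service account name differs.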

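If your service account has a different format, you can authorize it by using the commands in the Google Cloud Managed Service for Prometheus documentation, with the following changes:

- Replace the service account name gmp-test-sa with your service account.
- Grant the roles shown in the preceding set of commands, not only the roles/monitoring.metricWriter role.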

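Deploy the OpenTelemetry Collector

To create the collector configuration, copy the following YAML into a file named collector.yaml. You can also find this configuration in the otlp-k8s-ingest repository on GitHub.

In your copy, be sure to replace ${GOOGLE_CLOUD_PROJECT} with your project ID, PROJECT_ID.

OTLP for Prometheus metrics is available only with OpenTelemetry Collector version 0.140.0 or later.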
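The placeholder substitution described above can be scripted instead of edited by hand. The following is a minimal sketch that uses sed; the function name render_collector_config and the template file name in the usage note are hypothetical and introduced only for illustration.

```shell
# Hypothetical helper: copy a collector config from src to dst, replacing every
# literal ${GOOGLE_CLOUD_PROJECT} with the given project ID.
# Fails if any placeholder is still present in the output.
render_collector_config() {
  project_id="$1"
  src="$2"
  dst="$3"
  # [$] matches a literal dollar sign, so the shell never expands the placeholder.
  sed 's/[$]{GOOGLE_CLOUD_PROJECT}/'"$project_id"'/g' "$src" > "$dst" || return 1
  ! grep -q '[$]{GOOGLE_CLOUD_PROJECT}' "$dst"
}
```

For example, `render_collector_config PROJECT_ID collector.template.yaml collector.yaml` writes a collector.yaml in which the placeholder has been replaced. Writing to a separate output file avoids relying on `sed -i`, whose syntax differs between GNU and BSD sed.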

Configure the deployed OpenTelemetry Collector

Create Kubernetes resources to configure the collector deployment.

To create the opentelemetry namespace and the collector configuration in that namespace, run the following commands:

kubectl create namespace opentelemetry
kubectl create configmap collector-config -n opentelemetry --from-file=collector.yaml

To configure the collector with Kubernetes resources, run the following commands:

kubectl apply -f https://raw.githubusercontent.com/GoogleCloudPlatform/otlp-k8s-ingest/refs/heads/otlpmetric/k8s/base/2_rbac.yaml
kubectl apply -f https://raw.githubusercontent.com/GoogleCloudPlatform/otlp-k8s-ingest/refs/heads/otlpmetric/k8s/base/3_service.yaml
kubectl apply -f https://raw.githubusercontent.com/GoogleCloudPlatform/otlp-k8s-ingest/refs/heads/otlpmetric/k8s/base/4_deployment.yaml
kubectl apply -f https://raw.githubusercontent.com/GoogleCloudPlatform/otlp-k8s-ingest/refs/heads/otlpmetric/k8s/base/5_hpa.yaml

Wait for the collector Pods to reach the Running state with 1/1 containers ready. On Autopilot, this takes about three minutes if this is the first workload deployed. To check the Pods, use the following command:

kubectl get po -n opentelemetry -w

To stop watching the Pod status, press Ctrl-C.

You can also check the collector logs to make sure that there are no obvious errors:

kubectl logs -n opentelemetry deployment/opentelemetry-collector
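If you prefer a non-interactive check, for example in a script, the wait and the log inspection can be combined. The sketch below uses `kubectl rollout status` rather than the watch command above, and treats any log line containing "error" as suspicious, which is a rough heuristic and may flag harmless lines. The function name wait_for_collector is a hypothetical helper introduced for illustration.

```shell
# Hypothetical helper: block until the collector Deployment has rolled out,
# then scan its logs. Returns 1 if the rollout fails or times out, 2 if the
# logs contain a line mentioning "error" (case-insensitive), and 0 otherwise.
wait_for_collector() {
  timeout="${1:-5m}"
  kubectl rollout status deployment/opentelemetry-collector \
    --namespace opentelemetry --timeout="$timeout" || return 1
  # grep prints matching lines and succeeds only when at least one line matches.
  if kubectl logs --namespace opentelemetry deployment/opentelemetry-collector \
      | grep -i 'error'; then
    return 2
  fi
  return 0
}
```

For example, `wait_for_collector 5m` waits up to five minutes for the rollout, then prints any log lines that deserve a closer look.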

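Deploy the telemetry generator

You can test your configuration with the open-source telemetrygen tool. This application generates telemetry and sends it to the collector.

To deploy the telemetrygen application in the opentelemetry-demo namespace, run the following command:

kubectl apply -f https://raw.githubusercontent.com/GoogleCloudPlatform/otlp-k8s-ingest/refs/heads/main/sample/app.yaml

After you create the deployment, it might take some time for the Pods to be created and start running. To check the status of the Pods, run the following command:

kubectl get po -n opentelemetry-demo -w

To stop watching the Pod status, press Ctrl-C.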

使用 Metrics Explorer 查詢指標

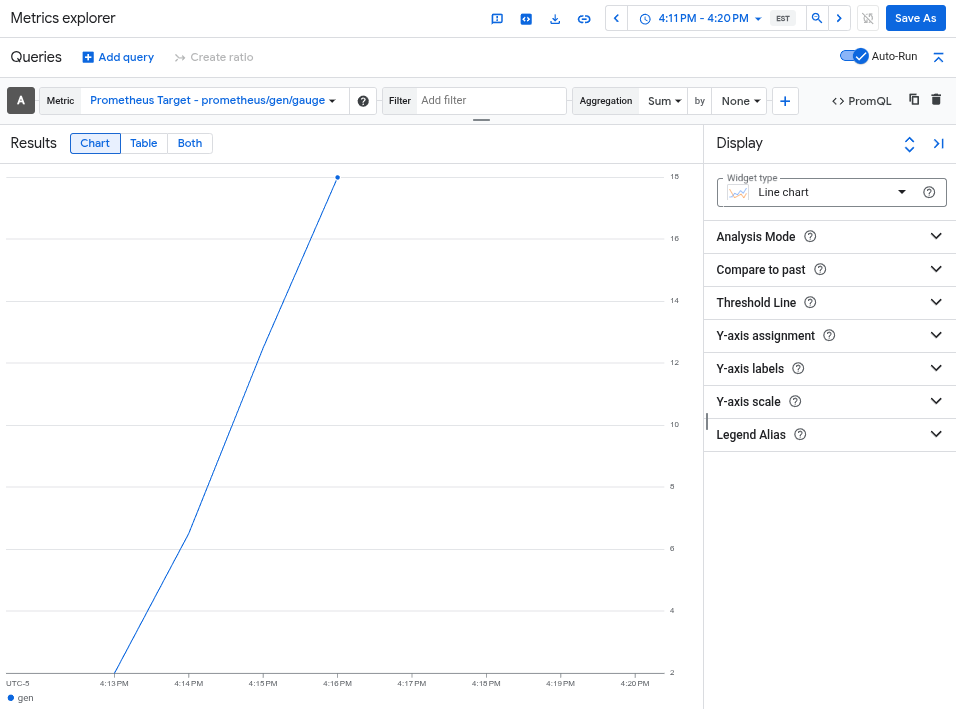

telemetrygen 工具會寫入名為 gen 的指標。您可以在 Metrics Explorer 的查詢產生器介面和 PromQL 查詢編輯器中,查詢這項指標。

前往 Google Cloud 控制台的 leaderboard「指標探索器」頁面:

如果您是使用搜尋列尋找這個頁面,請選取子標題為「Monitoring」的結果。

- 如果您使用 Metrics Explorer 查詢建構工具介面,指標的完整名稱為

prometheus.googleapis.com/gen/gauge。 - 如果您使用 PromQL 查詢編輯器,可以透過名稱

gen查詢指標。

下圖顯示 Metrics Explorer 中的 gen 指標圖表:
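As an alternative to the console, the same PromQL query can be sent from the command line. The following sketch assumes the Prometheus-compatible HTTP query endpoint of Cloud Monitoring and an access token from `gcloud auth print-access-token`; the endpoint path is an assumption to verify against the Cloud Monitoring documentation before relying on it, and the function name query_metric is a hypothetical helper introduced for illustration.

```shell
# Hypothetical helper: run an instant PromQL query against a project and
# print the raw JSON response.
query_metric() {
  project_id="$1"
  promql="$2"
  token="$(gcloud auth print-access-token)" || return 1
  # --data-urlencode sends the query as a URL-encoded form field in a POST.
  curl --silent --show-error \
    --header "Authorization: Bearer ${token}" \
    --data-urlencode "query=${promql}" \
    "https://monitoring.googleapis.com/v1/projects/${project_id}/location/global/prometheus/api/v1/query"
}
```

For example, `query_metric PROJECT_ID gen` requests the current value of the gen metric written by telemetrygen.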

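Delete the cluster

After you have verified the deployment by querying the metric, you can delete the cluster. To delete the cluster, run the following command:

gcloud container clusters delete --location CLUSTER_LOCATION otlp-test --project PROJECT_ID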

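Use other OpenTelemetry-based collectors

You might be able to use other OpenTelemetry-based collectors to send OTLP metrics to the Telemetry API, but Google Cloud doesn't provide customer support for non-standard OpenTelemetry collectors.

For example, you can send metrics with Grafana Alloy version 1.16.0 or later by authenticating through the otelcol.auth.google component and configuring it in a way similar to the standard OpenTelemetry Collector, as described in this document.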

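What's next

- To learn how to use the OpenTelemetry Collector and the Telemetry API together with OpenTelemetry zero-code instrumentation, see "Use OpenTelemetry zero-code instrumentation for Java".

- To learn how to send metrics from applications that use SDKs, see "Send metrics from applications by using SDKs".

- To learn how to migrate from other exporters to the otlphttp exporter, see "Migrate to the OTLP exporter".

- To learn more about the Telemetry API, see "Telemetry (OTLP) API overview".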