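This document describes how to deploy the OpenTelemetry Collector, configure it to use the otlphttp exporter and the Telemetry (OTLP) API, and run a telemetry generator that writes metrics to Cloud Monitoring. You can then view these metrics in Cloud Monitoring.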

If you use Google Kubernetes Engine, you can follow the instructions in Managed OpenTelemetry for GKE instead of manually deploying and configuring an OpenTelemetry Collector that uses the Telemetry API.

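If you use an SDK to send metrics from your application directly to the Telemetry API, see Use SDKs to send metrics from applications for more information and examples.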

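You can also use the OpenTelemetry Collector and the Telemetry API together with OpenTelemetry zero-code instrumentation. For more information, see Instrument Java apps with OpenTelemetry zero-code instrumentation.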

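Before you begin

This section describes how to set up your environment to deploy and use the collector.

Select or create a Google Cloud project

Choose a Google Cloud project for this walkthrough. If you don't already have a Google Cloud project, create one: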

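- Sign in to your Google Cloud account. If you're new to Google Cloud, create an account to evaluate how our products perform in real-world scenarios. New customers also get $300 in free credits to run, test, and deploy workloads.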

- In the Google Cloud console, on the project selector page, select or create a Google Cloud project.

  Roles required to select or create a project

  - Select a project: Selecting a project doesn't require a specific IAM role; you can select any project that you've been granted a role on.

  - Create a project: To create a project, you need the Project Creator role (roles/resourcemanager.projectCreator), which contains the resourcemanager.projects.create permission. Learn how to grant roles.

- Verify that billing is enabled for your Google Cloud project.


Install the command-line tools

This document uses the following command-line tools:

- gcloud
- kubectl

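The gcloud and kubectl tools are part of the Google Cloud CLI. For information about installing them, see Managing Google Cloud CLI components. To see which gcloud CLI components you have installed, run the following command: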

gcloud components list

To configure the gcloud CLI for use, run the following commands:

gcloud auth login
gcloud config set project PROJECT_ID
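Before you continue, you can confirm that both tools are on your PATH. The following bash sketch defines a hypothetical helper, missing_tools, which isn't part of the gcloud CLI. It prints the name of each requested command that the shell can't find.

```shell
# Hypothetical helper (not part of the gcloud CLI): print each named tool
# that can't be found as a command, one per line. Prints nothing if all
# of the tools are available.
missing_tools() {
  local tool
  for tool in "$@"; do
    # command -v succeeds only if the shell can resolve the name.
    if ! command -v "$tool" >/dev/null 2>&1; then
      printf '%s\n' "$tool"
    fi
  done
}

# Usage: check the two tools this document relies on.
# missing_tools gcloud kubectl
```

If the usage line prints nothing, both gcloud and kubectl are available.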

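Enable the APIs

In your Google Cloud project, enable the Cloud Monitoring API and the Telemetry API. Pay particular attention to the Telemetry API (telemetry.googleapis.com), because you might not have encountered it before.

Run the following commands to enable the APIs:

gcloud services enable monitoring.googleapis.com
gcloud services enable telemetry.googleapis.com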
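To confirm that both APIs are enabled in the current gcloud project, you can compare the required names against the output of gcloud services list --enabled. The following bash sketch does that. The helper name missing_apis and the REQUIRED_APIS array are hypothetical.

```shell
# The two APIs this walkthrough needs.
REQUIRED_APIS=(monitoring.googleapis.com telemetry.googleapis.com)

# Hypothetical helper: print each required API that isn't enabled in the
# current gcloud project. value(config.name) prints only the service names.
missing_apis() {
  local enabled api
  enabled="$(gcloud services list --enabled --format='value(config.name)')" || return 1
  for api in "${REQUIRED_APIS[@]}"; do
    # -x matches whole lines only; -F treats the API name as a fixed string.
    if ! grep -qxF "$api" <<<"$enabled"; then
      printf '%s\n' "$api"
    fi
  done
}

# Usage:
# missing_apis
```

An empty result means that both APIs are enabled.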

Create a cluster

Create a GKE cluster.

Run the following command to create a Google Kubernetes Engine Autopilot cluster named otlp-test:

gcloud container clusters create-auto --location CLUSTER_LOCATION otlp-test --project PROJECT_ID

After the cluster is created, run the following command to connect to it:

gcloud container clusters get-credentials otlp-test --region CLUSTER_LOCATION --project PROJECT_ID
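The get-credentials command adds a kubectl context named gke_PROJECT_ID_CLUSTER_LOCATION_otlp-test and makes it the current context. The following bash sketch checks that kubectl points at that cluster before you run the later kubectl commands. The helper names expected_gke_context and using_cluster are hypothetical.

```shell
# Hypothetical helper: the context name that gcloud container clusters
# get-credentials creates, in the form gke_PROJECT_LOCATION_CLUSTER.
expected_gke_context() {
  local project_id="$1" location="$2" cluster="$3"
  printf 'gke_%s_%s_%s' "$project_id" "$location" "$cluster"
}

# Succeed only if kubectl's current context is the expected GKE context.
using_cluster() {
  local current
  current="$(kubectl config current-context)" || return 1
  [ "$current" = "$(expected_gke_context "$1" "$2" "$3")" ]
}

# Usage (replace the placeholders):
# using_cluster PROJECT_ID CLUSTER_LOCATION otlp-test && echo "Connected to otlp-test"
```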

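Authorize the Kubernetes service account

The following commands grant the required Identity and Access Management (IAM) roles to the Kubernetes service account. These commands assume that you're using Workload Identity Federation for GKE: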

export PROJECT_NUMBER=$(gcloud projects describe PROJECT_ID --format="value(projectNumber)")

gcloud projects add-iam-policy-binding projects/PROJECT_ID \
  --role=roles/logging.logWriter \
  --member=principal://iam.googleapis.com/projects/$PROJECT_NUMBER/locations/global/workloadIdentityPools/PROJECT_ID.svc.id.goog/subject/ns/opentelemetry/sa/opentelemetry-collector \
  --condition=None

gcloud projects add-iam-policy-binding projects/PROJECT_ID \
  --role=roles/monitoring.metricWriter \
  --member=principal://iam.googleapis.com/projects/$PROJECT_NUMBER/locations/global/workloadIdentityPools/PROJECT_ID.svc.id.goog/subject/ns/opentelemetry/sa/opentelemetry-collector \
  --condition=None

gcloud projects add-iam-policy-binding projects/PROJECT_ID \
  --role=roles/telemetry.tracesWriter \
  --member=principal://iam.googleapis.com/projects/$PROJECT_NUMBER/locations/global/workloadIdentityPools/PROJECT_ID.svc.id.goog/subject/ns/opentelemetry/sa/opentelemetry-collector \
  --condition=None
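If you'd rather not repeat the binding command three times, the following bash sketch loops over the same three roles. The helper names ksa_principal and grant_collector_roles are hypothetical. The member string follows the principal format used in the commands above, for the opentelemetry-collector service account in the opentelemetry namespace.

```shell
# Build the Workload Identity Federation principal for a Kubernetes
# service account, in the same format as the --member values above.
ksa_principal() {
  local project_id="$1" project_number="$2" namespace="$3" ksa="$4"
  printf 'principal://iam.googleapis.com/projects/%s/locations/global/workloadIdentityPools/%s.svc.id.goog/subject/ns/%s/sa/%s' \
    "$project_number" "$project_id" "$namespace" "$ksa"
}

# The roles granted by the commands above.
COLLECTOR_ROLES=(
  roles/logging.logWriter
  roles/monitoring.metricWriter
  roles/telemetry.tracesWriter
)

# Hypothetical helper: grant every role in COLLECTOR_ROLES to the
# collector's Kubernetes service account. Stops at the first failure.
grant_collector_roles() {
  local project_id="$1" project_number="$2" member role
  member="$(ksa_principal "$project_id" "$project_number" opentelemetry opentelemetry-collector)"
  for role in "${COLLECTOR_ROLES[@]}"; do
    gcloud projects add-iam-policy-binding "projects/${project_id}" \
      --role="$role" \
      --member="$member" \
      --condition=None || return 1
  done
}

# Usage, after exporting PROJECT_NUMBER as shown above:
# grant_collector_roles PROJECT_ID "$PROJECT_NUMBER"
```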

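If your service account uses a different format, you can authorize it by using the commands in the Google Cloud Managed Service for Prometheus documentation, with the following changes:

- Replace the service account name gmp-test-sa with your service account.
- Grant all of the roles shown in the preceding set of commands, not just the roles/monitoring.metricWriter role.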

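Deploy the OpenTelemetry Collector

Create the collector configuration by copying the collector YAML configuration into a file named collector.yaml. You can find this configuration in the otlp-k8s-ingest repository on GitHub.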

In your copy, be sure to replace ${GOOGLE_CLOUD_PROJECT} with your project ID, PROJECT_ID.
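You can make that replacement by hand, or with sed as in the following bash sketch. The helper name render_collector_config and the template file name in the usage line are hypothetical. The sketch writes a new file and leaves the source unchanged, so the source and destination must be different paths.

```shell
# Hypothetical helper: copy a collector configuration, replacing every
# literal ${GOOGLE_CLOUD_PROJECT} with the given project ID.
# src and dest must be different files, because dest is truncated first.
render_collector_config() {
  local project_id="$1" src="$2" dest="$3"
  # In the sed pattern, \$ matches a literal dollar sign. Unescaped braces
  # are literal characters in basic regular expressions.
  sed -e 's/\${GOOGLE_CLOUD_PROJECT}/'"$project_id"'/g' "$src" >"$dest"
}

# Usage: save the copied YAML as collector.template.yaml (hypothetical name),
# then render collector.yaml, the file name used by the ConfigMap command:
# render_collector_config PROJECT_ID collector.template.yaml collector.yaml
```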

OTLP for Prometheus metrics works correctly only when you use OpenTelemetry Collector version 0.140.0 or later.
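Because of this version requirement, you might want to check the collector version before you rely on Prometheus metrics. The following bash sketch compares two version strings by using GNU coreutils sort. The -V option orders lines by version number, and -C reports only whether the input is already sorted. The helper names version_at_least and image_tag are hypothetical. image_tag assumes that the image is referenced by tag rather than by digest.

```shell
# Hypothetical helper: succeed if version $2 is at least version $1.
# A leading "v" on $2 (for example v0.141.0) is ignored.
# Assumes GNU sort, which supports -V (version sort) and -C (quiet check).
version_at_least() {
  local minimum="$1" actual="${2#v}"
  printf '%s\n%s\n' "$minimum" "$actual" | sort -V -C
}

# Hypothetical helper: return the tag part of an image reference such as
# example.com/otelcol-contrib:0.140.0. Assumes a tag, not an @sha256 digest.
image_tag() {
  printf '%s' "${1##*:}"
}

# Usage:
# version_at_least 0.140.0 "$(image_tag IMAGE_REFERENCE)" && echo "version OK"
```

After the collector is deployed, you can get the image reference from the Deployment with kubectl get deployment opentelemetry-collector -n opentelemetry -o jsonpath='{.spec.template.spec.containers[0].image}'.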

Configure the deployed OpenTelemetry Collector

Configure the collector deployment by creating Kubernetes resources.

Run the following commands to create the opentelemetry namespace and to create the collector configuration in that namespace:

kubectl create namespace opentelemetry
kubectl create configmap collector-config -n opentelemetry --from-file=collector.yaml

Run the following commands to configure the collector by using Kubernetes resources:

kubectl apply -f https://raw.githubusercontent.com/GoogleCloudPlatform/otlp-k8s-ingest/refs/heads/otlpmetric/k8s/base/2_rbac.yaml
kubectl apply -f https://raw.githubusercontent.com/GoogleCloudPlatform/otlp-k8s-ingest/refs/heads/otlpmetric/k8s/base/3_service.yaml
kubectl apply -f https://raw.githubusercontent.com/GoogleCloudPlatform/otlp-k8s-ingest/refs/heads/otlpmetric/k8s/base/4_deployment.yaml
kubectl apply -f https://raw.githubusercontent.com/GoogleCloudPlatform/otlp-k8s-ingest/refs/heads/otlpmetric/k8s/base/5_hpa.yaml

Wait for the collector pod to reach the Running state with 1/1 containers ready. If this is the first workload deployed to the cluster, this takes about three minutes on Autopilot. To check the pods, use the following command:

kubectl get po -n opentelemetry -w

To stop watching the pod status, press Ctrl-C.
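For a non-interactive check, such as in a script, you can block until the collector Deployment has finished rolling out. The following bash sketch wraps kubectl rollout status. The function name is hypothetical. The five-minute default timeout is an assumption that leaves headroom over the roughly three minutes mentioned above.

```shell
# Hypothetical helper: wait for the opentelemetry-collector Deployment to
# finish rolling out. Returns non-zero if it doesn't finish within the
# timeout (default 5m, an assumed value).
wait_for_collector() {
  local timeout="${1:-5m}"
  kubectl rollout status deployment/opentelemetry-collector \
    -n opentelemetry --timeout="$timeout"
}

# Usage:
# wait_for_collector && echo "Collector is ready"
```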

You can also check the collector logs to make sure that there are no obvious errors:

kubectl logs -n opentelemetry deployment/opentelemetry-collector
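To make obvious errors easier to spot, you can filter the same logs. The following bash sketch prints every log line that contains "error", ignoring case. This is only a rough heuristic: it can match harmless lines and miss problems that are logged without that word. The function name is hypothetical.

```shell
# Hypothetical helper: print collector log lines that mention "error"
# (case-insensitive). Returns 0 if none are found, 1 if any are found or
# if kubectl fails.
collector_log_errors() {
  local logs
  logs="$(kubectl logs -n opentelemetry deployment/opentelemetry-collector)" || return 1
  if grep -i 'error' <<<"$logs"; then
    return 1
  fi
  return 0
}

# Usage:
# collector_log_errors && echo "No error lines found"
```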

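Deploy the telemetry generator

You can use the open source telemetrygen tool to test your configuration. This app generates telemetry data and sends it to the collector.

To deploy the telemetrygen app in the opentelemetry-demo namespace, run the following command:

kubectl apply -f https://raw.githubusercontent.com/GoogleCloudPlatform/otlp-k8s-ingest/refs/heads/main/sample/app.yaml

After you create the deployment, it might take a while for the pods to be created and start running. To check the status of the pods, run the following command:

kubectl get po -n opentelemetry-demo -w

To stop watching the pod status, press Ctrl-C.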
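As with the collector, you can wait for the telemetrygen pods without watching them interactively. The following bash sketch wraps kubectl wait for every pod in the opentelemetry-demo namespace. The function name and the 180-second default timeout are assumptions. kubectl wait with --all can fail if no pods exist yet, so run it after the pods have been created.

```shell
# Hypothetical helper: wait until every pod in the opentelemetry-demo
# namespace reports the Ready condition. Returns non-zero on timeout
# (default 180s, an assumed value).
wait_for_demo_pods() {
  local timeout="${1:-180s}"
  kubectl wait --for=condition=Ready pods --all \
    -n opentelemetry-demo --timeout="$timeout"
}

# Usage:
# wait_for_demo_pods && echo "telemetrygen is running"
```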

使用 Metrics Explorer 查询指标

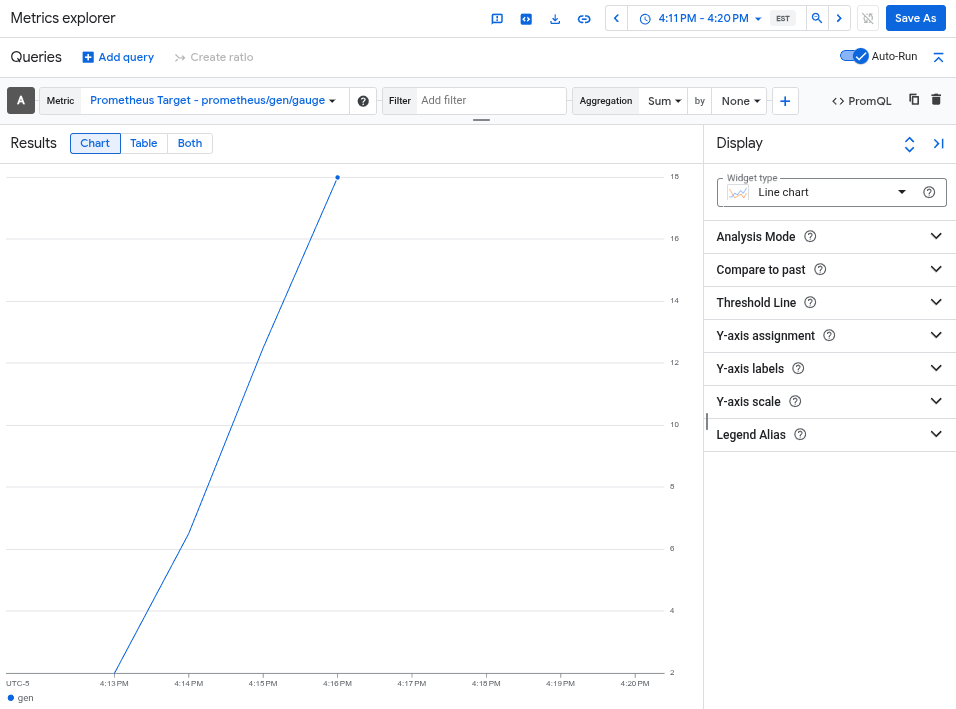

telemetrygen 工具会写入名为 gen 的指标。您可以在 Metrics Explorer 中通过查询构建器界面和 PromQL 查询编辑器查询此指标。

在 Google Cloud 控制台中,前往 leaderboard Metrics Explorer 页面:

如果您使用搜索栏查找此页面,请选择子标题为监控的结果。

- 如果您使用 Metrics Explorer 查询构建器界面,则指标的全称为

prometheus.googleapis.com/gen/gauge。 - 如果您使用 PromQL 查询编辑器,则可以使用名称

gen查询该指标。

下图显示了 Metrics Explorer 中的 gen 指标图表:
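You can also run the same PromQL query from the command line. The following bash sketch assumes that your project exposes the Prometheus-compatible query endpoint that Cloud Monitoring provides for Managed Service for Prometheus, at https://monitoring.googleapis.com/v1/projects/PROJECT_ID/location/global/prometheus/api/v1/query. It also assumes that the account that gcloud is logged in with can read monitoring data. The helper names are hypothetical. The response is Prometheus-style JSON.

```shell
# Hypothetical helper: the PromQL instant-query URL for a project
# (assumed endpoint, see the lead-in above).
promql_query_url() {
  local project_id="$1"
  printf 'https://monitoring.googleapis.com/v1/projects/%s/location/global/prometheus/api/v1/query' "$project_id"
}

# Hypothetical helper: run the PromQL query "gen" with an OAuth access
# token from the gcloud CLI.
query_gen_metric() {
  local project_id="$1" token
  token="$(gcloud auth print-access-token)" || return 1
  # -G sends the --data-urlencode value as a URL query parameter (GET).
  curl -sS -G "$(promql_query_url "$project_id")" \
    -H "Authorization: Bearer ${token}" \
    --data-urlencode 'query=gen'
}

# Usage:
# query_gen_metric PROJECT_ID
```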

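Delete the cluster

After you verify the deployment by querying the metrics, you can delete the cluster. To delete the cluster, run the following command: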

gcloud container clusters delete --location CLUSTER_LOCATION otlp-test --project PROJECT_ID
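Deleting the cluster doesn't remove the project-level IAM role bindings that you granted to the collector's Kubernetes service account. To remove those bindings as well, you can use the following bash sketch, which calls gcloud projects remove-iam-policy-binding for the same three roles and principal. The function name is hypothetical.

```shell
# Hypothetical helper: remove the three role bindings granted earlier to the
# opentelemetry/opentelemetry-collector Kubernetes service account.
revoke_collector_roles() {
  local project_id="$1" project_number="$2" member role
  # Same principal format as the --member values in the grant commands.
  member="principal://iam.googleapis.com/projects/${project_number}/locations/global/workloadIdentityPools/${project_id}.svc.id.goog/subject/ns/opentelemetry/sa/opentelemetry-collector"
  for role in roles/logging.logWriter roles/monitoring.metricWriter roles/telemetry.tracesWriter; do
    gcloud projects remove-iam-policy-binding "$project_id" \
      --role="$role" \
      --member="$member" \
      --condition=None || return 1
  done
}

# Usage:
# revoke_collector_roles PROJECT_ID "$PROJECT_NUMBER"
```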

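Use other OpenTelemetry-based collectors

You might be able to use other OpenTelemetry-based collectors to send OTLP metrics to the Telemetry API. However, Google Cloud doesn't provide customer support for non-standard OpenTelemetry-based collectors.

For example, you can send metrics with Grafana Alloy version 1.16.0 or later. Use the otelcol.auth.google component for authentication, and otherwise configure Alloy the same way as the standard OpenTelemetry Collector described in this document.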

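What's next

- To learn how to use the OpenTelemetry Collector and the Telemetry API with OpenTelemetry zero-code instrumentation, see Use OpenTelemetry zero-code instrumentation for Java.

- To learn how to send metrics from applications that use SDKs, see Use SDKs to send metrics from applications.

- To learn how to migrate from other exporters to the otlphttp exporter, see Migrate to the OTLP exporter.

- For more information about the Telemetry API, see Telemetry (OTLP) API overview.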