Insights sur les performances des ventes

Cet exemple de solution montre comment accélérer les insights sur l'état des performances commerciales à l'aide de données provenant de SAP ERP ou SAP BDC.

Il vous permet de :

- Optimisez vos revenus par produit, région et organisation commerciale.

- Suivez les performances sur les canaux de distribution et les divisions.

- Utilisez l'IA pour identifier les goulots d'étranglement ou les tendances de livraison à temps qui ont un impact sur les revenus et les coûts.

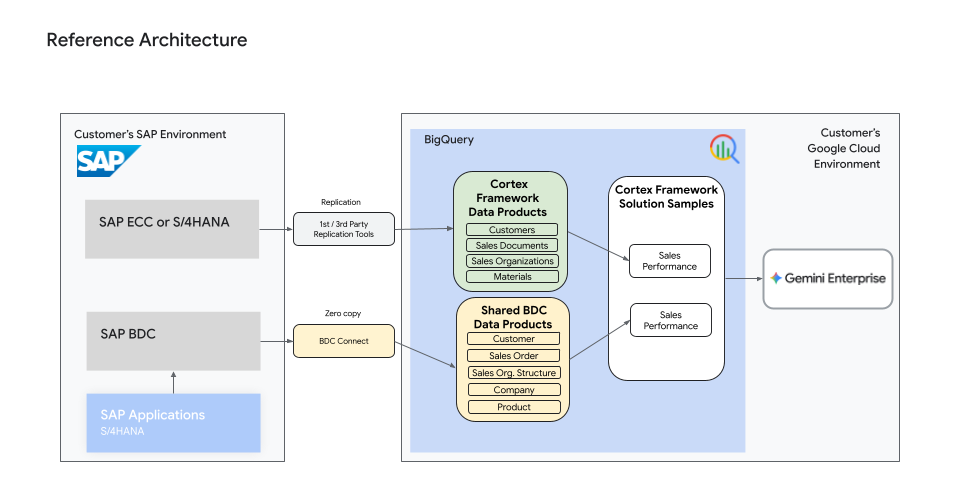

Architecture de référence

Une architecture de référence de haut niveau fournit une vue d'ensemble des produits de données spécifiques à la source requis pour alimenter ce cas d'utilisation.

Produits de données requis

Si vous créez des produits de données à partir de données SAP ERP, les produits de données Cortex Framework suivants sont requis :

- Clients

- Documents commerciaux

- Organisations commerciales

- Matériaux

De plus, les clients peuvent également combiner de manière fluide d'autres tables de base SAP ERP, par exemple KNVV, pour améliorer les insights, comme indiqué dans les extraits de code.

Si vous créez des produits de données à partir de données SAP BDC, les produits de données BDC suivants sont requis :

- Client

- Commande client

- Structure de l'équipe commerciale

- Entreprise

- Produit

Extraits de code

L'extrait de code suivant montre comment créer un modèle de données BigQuery pour obtenir des insights sur les performances commerciales à partir des produits de données SAP ERP issus de Cortex Framework ou de l'intégration des produits de données SAP BDC.

WITH date_dimension as (

SELECT

dt as date,

CAST(FORMAT_DATE('%Y%m%d', dt) AS INT64) as date_int,

FORMAT_DATE('%Y%m%d', dt) as date_str,

FORMAT_DATE('%Y-%m-%d', dt) as date_str2,

EXTRACT(YEAR FROM dt) as cal_year,

IF(EXTRACT(QUARTER FROM dt) IN (1, 2), 1, 2) as cal_semester,

EXTRACT(QUARTER FROM dt) as cal_quarter,

EXTRACT(MONTH FROM dt) as cal_month,

EXTRACT(WEEK FROM dt) as cal_week,

CAST(EXTRACT(YEAR FROM dt) AS STRING) as cal_year_str,

IF(EXTRACT(QUARTER FROM dt) IN (1, 2), '01', '02') as cal_semester_str,

IF(EXTRACT(QUARTER FROM dt) IN (1, 2), 'S1', 'S2') as cal_semester_str2,

'0' || EXTRACT(QUARTER FROM dt) as cal_quarter_str,

'Q' || EXTRACT(QUARTER FROM dt) as cal_quarter_str2,

FORMAT_DATE('%B', dt) as cal_month_long_str,

FORMAT_DATE('%b', dt) as cal_month_short_str,

'0' || (EXTRACT(WEEK FROM dt)) as cal_week_str,

FORMAT_DATE('%A', dt) as day_name_long,

FORMAT_DATE('%a', dt) as day_name_short,

EXTRACT(DAYOFWEEK FROM dt) as day_of_week,

EXTRACT(DAY FROM dt) as day_of_month,

DATE_DIFF(dt, DATE_TRUNC(dt, QUARTER), DAY) + 1 as day_of_quarter,

IF(

EXTRACT(QUARTER FROM dt) IN (1, 2),

EXTRACT(DAYOFYEAR FROM dt),

IF(

EXTRACT(QUARTER FROM dt) = 3,

EXTRACT(DAYOFYEAR FROM dt) - EXTRACT(DAYOFYEAR FROM (DATE_TRUNC(dt, QUARTER) - 1)),

EXTRACT(DAYOFYEAR FROM dt) - EXTRACT(DAYOFYEAR FROM (DATE_TRUNC(DATE_SUB(dt, INTERVAL 3 MONTH), QUARTER)))

)

) as day_of_semester,

EXTRACT(DAYOFYEAR FROM dt) as day_of_year,

IF(

EXTRACT(QUARTER FROM dt) IN (1, 2),

EXTRACT(YEAR FROM dt) || 'S1',

EXTRACT(YEAR FROM dt) || 'S2'

) as year_semester,

EXTRACT(YEAR FROM dt) || 'Q' || EXTRACT(QUARTER FROM dt) as year_quarter,

CAST(FORMAT_DATE('%Y%m', dt) AS STRING) as year_month,

EXTRACT(YEAR FROM dt) || ' ' || FORMAT_DATE('%b', dt) as year_month2,

FORMAT_DATE('%Y%U', dt) as year_week,

(DATE_TRUNC(dt, YEAR) = dt) as is_first_day_of_year,

(LAST_DAY(dt, YEAR) = dt) as is_last_day_of_year,

(EXTRACT(MONTH FROM dt) IN (1, 7) AND EXTRACT(DAY FROM dt) = 1) as is_first_day_of_semester,

((EXTRACT(MONTH FROM dt) IN (6) AND EXTRACT(DAY FROM dt) IN (30))

OR (EXTRACT(MONTH FROM dt) IN (12) AND EXTRACT(DAY FROM dt) IN (31))) as is_last_day_of_semester,

(DATE_TRUNC(dt, QUARTER) = dt) as is_first_day_of_quarter,

(LAST_DAY(dt, QUARTER) = dt) as is_last_day_of_quarter,

(DATE_TRUNC(dt, MONTH) = dt) as is_first_day_of_month,

(LAST_DAY(dt, MONTH) = dt) as is_last_day_of_month,

(DATE_TRUNC(dt, WEEK) = dt) as is_first_day_of_week,

(LAST_DAY(dt, WEEK) = dt) as is_last_day_of_week,

((MOD(EXTRACT(YEAR FROM dt), 4) = 0 AND MOD(EXTRACT(YEAR FROM dt), 100) != 0)

OR MOD(EXTRACT(YEAR FROM dt), 400) = 0) as is_leap_year,

(FORMAT_DATE('%A', dt) NOT IN ('Saturday', 'Sunday')) as is_week_day,

(FORMAT_DATE('%A', dt) IN ('Saturday', 'Sunday')) as is_week_end,

(DATE_TRUNC(dt, WEEK)) as week_start_date,

(LAST_DAY(dt, WEEK)) as week_end_date,

(DATE_TRUNC(dt, MONTH)) as month_start_date,

(LAST_DAY(dt, MONTH)) as month_end_date,

(EXTRACT(WEEK FROM LAST_DAY(dt, ISOYEAR)) = 53) as has_53_weeks

FROM UNNEST(GENERATE_DATE_ARRAY(

DATE_SUB(

DATE_TRUNC(CURRENT_DATE(), YEAR), INTERVAL 20 YEAR),

LAST_DAY(DATE_ADD(CURRENT_DATE(), INTERVAL 20 YEAR)),

INTERVAL 1 DAY)

) as dt

),

delivered_qty AS (

SELECT

client_mandt,

internal_reference_document_number_vgbel AS sales_document_id,

internal_reference_document_item_vgpos AS item_id,

SUM(actual_quantity_delivered_in_sales_units_lfimg) AS total_delivered_qty

FROM <YOUR_PROJECT_ID>.<YOUR_CORTEX_DATA_PRODUCTS_DATASET>.delivery_document_items

GROUP BY client_mandt, internal_reference_document_number_vgbel, internal_reference_document_item_vgpos

)

SELECT

header.document_number_vbeln AS sales_document_id,

item.item_number_posnr AS item_id,

header.sales_organization_vkorg AS sales_organization_id,

salesorg.name_vtext AS sales_organization_name,

header.division_spart AS division_id,

division.name_vtext AS division_name,

header.sold_to_party_kunnr AS customer_id,

customer.name1_name1 AS customer_name,

item.material_number_matnr AS product_id,

product.material_text_maktx AS product_name,

item.net_value_of_the_sales_document_item_in_document_currency_netwr AS net_value,

header.document_currency_waerk AS document_currency,

header.requested_delivery_date_vdatu AS requested_delivery_date,

header.delivery_block_lifsk AS delivery_block_reason,

-- Using vbap.gbsta for overall status in S4

foundation_item.gbsta AS overall_status,

-- Deriving delivery status based on delivered quantity

CASE

WHEN delivered_qty.total_delivered_qty IS NULL OR delivered_qty.total_delivered_qty = 0 THEN 'A'

WHEN delivered_qty.total_delivered_qty < item.cumulative_order_quantity_kwmeng THEN 'B'

ELSE 'C'

END AS delivery_status,

salesarea.bzirk AS sales_region,

-- Date Dimensions for Document Date

dimensional_document_date.cal_year AS year_of_sales_document,

dimensional_document_date.cal_month AS month_of_sales_document,

dimensional_document_date.cal_quarter AS quarter_of_sales_document,

-- Date Dimensions for Requested Delivery Date

dimensional_delivery_date.cal_year AS year_of_requested_delivery,

dimensional_delivery_date.cal_month AS month_of_requested_delivery,

dimensional_delivery_date.cal_quarter AS quarter_of_requested_delivery,

-- Calculated fields

CASE

WHEN CURRENT_DATE() > header.requested_delivery_date_vdatu

AND (delivered_qty.total_delivered_qty IS NULL

OR delivered_qty.total_delivered_qty < item.cumulative_order_quantity_kwmeng)

THEN TRUE

ELSE FALSE

END AS is_delivery_overdue

FROM <YOUR_PROJECT_ID>.<YOUR_CORTEX_DATA_PRODUCTS_DATASET>.sales_document_headers AS header

JOIN <YOUR_PROJECT_ID>.<YOUR_CORTEX_DATA_PRODUCTS_DATASET>.sales_document_items AS item

ON header.client_mandt = item.client_mandt

AND header.document_number_vbeln = item.document_number_vbeln

-- Joins with Master Data Products for Text

LEFT JOIN <YOUR_PROJECT_ID>.<YOUR_CORTEX_DATA_PRODUCTS_DATASET>.customers_md AS customer

ON header.client_mandt = customer.client_mandt

AND header.sold_to_party_kunnr = customer.customer_number_kunnr

LEFT JOIN <YOUR_PROJECT_ID>.<YOUR_CORTEX_DATA_PRODUCTS_DATASET>.materials_md AS product

ON item.client_mandt = product.client_mandt

AND item.material_number_matnr = product.material_number_matnr

LEFT JOIN <YOUR_PROJECT_ID>.<YOUR_CORTEX_DATA_PRODUCTS_DATASET>.sales_organizations_md AS salesorg

ON header.client_mandt = salesorg.client_mandt

AND header.sales_organization_vkorg = salesorg.sales_organization_vkorg

LEFT JOIN <YOUR_PROJECT_ID>.<YOUR_CORTEX_DATA_PRODUCTS_DATASET>.divisions_md AS division

ON header.client_mandt = division.client_mandt

AND header.division_spart = division.division_spart

-- Join with KNVV for Sales Org Region

LEFT JOIN <YOUR_PROJECT_ID>.<YOUR_CORTEX_DATA_FOUNDATION_DATASET>.knvv AS salesarea

ON header.client_mandt = salesarea.mandt

AND header.sold_to_party_kunnr = salesarea.kunnr

AND header.sales_organization_vkorg = salesarea.vkorg

AND header.distribution_channel_vtweg = salesarea.vtweg

AND header.division_spart = salesarea.spart

-- Join with vbap directly to get gbsta (Overall Status)

LEFT JOIN <YOUR_PROJECT_ID>.<YOUR_CORTEX_DATA_FOUNDATION_DATASET>.vbap AS foundation_item

ON item.client_mandt = foundation_item.mandt

AND item.document_number_vbeln = foundation_item.vbeln

AND item.item_number_posnr = foundation_item.posnr

-- Join with delivered_qty CTE

LEFT JOIN delivered_qty AS delivered_qty

ON item.client_mandt = delivered_qty.client_mandt

AND item.document_number_vbeln = delivered_qty.sales_document_id

AND item.item_number_posnr = delivered_qty.item_id

-- Joins for Date Dimensions

LEFT JOIN date_dimension AS dimensional_document_date

ON header.document_date_audat = dimensional_document_date.date

LEFT JOIN date_dimension AS dimensional_delivery_date

ON header.requested_delivery_date_vdatu = dimensional_delivery_date.date

Activer les agents d'IA

Après avoir créé votre modèle de données Insights sur les performances commerciales dans BigQuery, vous pouvez créer un agent de données personnalisé. Cela vous permet d'interroger directement les tendances des ventes et les métriques de performances en langage naturel, sans avoir besoin d'utiliser un langage SQL complexe.