Before you begin

Before you view and analyze evaluation results, make sure that the following prerequisites are met:

- You have run at least one evaluation as described in Evaluate your agents or Run offline evaluations.

- You have configured a Cloud Storage bucket for evaluation output, if you run offline evaluations.

- (Optional) If you use the SDK to fetch results, your environment is authenticated.

After running an evaluation, Agent Platform provides diagnostic tools to help you identify root causes of failure. You can analyze results at three levels: aggregate trends in the dashboard, semantic groups in failure clusters, and granular logic paths in individual traces.

The evaluation dashboard for online monitors

For agents with active Online Monitors, you can view aggregate performance trends in the dashboard:

- In the Google Cloud console, navigate to the Agent Platform > Agents page.

- In the left navigation menu, select Deployments.

- Select your agent.

- Click the Dashboard tab and select the Evaluation subsection.

The Evaluation subsection includes the following views:

- Performance Trends: Visualize how scores for metrics like Task Success or Tool Use Quality change across different agent versions or timeframes.

- Zero State: For agents without active Online Monitors, this view identifies coverage gaps and provides a call-to-action to begin evaluation.

View evaluation results with the SDK

You can programmatically access evaluation results using the Agent Platform SDK. The SDK provides built-in interactive visualizations for Colab and Jupyter notebook environments that display both aggregate summary metrics and per-case detailed results.

After running an evaluation, call .show() on the result object to render an interactive report directly in your notebook:

```python
from vertexai import evals, types

# Assumes `client` is an initialized SDK client and `eval_dataset` holds
# the evaluation dataset prepared earlier.

# Run an evaluation
result = client.evals.evaluate(
    dataset=eval_dataset,
    metrics=[
        types.RubricMetric.FINAL_RESPONSE_QUALITY,
        types.RubricMetric.TOOL_USE_QUALITY,
        types.RubricMetric.HALLUCINATION,
        types.RubricMetric.SAFETY,
    ],
)

# Visualize aggregate and per-case results in your notebook
result.show()
```

The visualization includes:

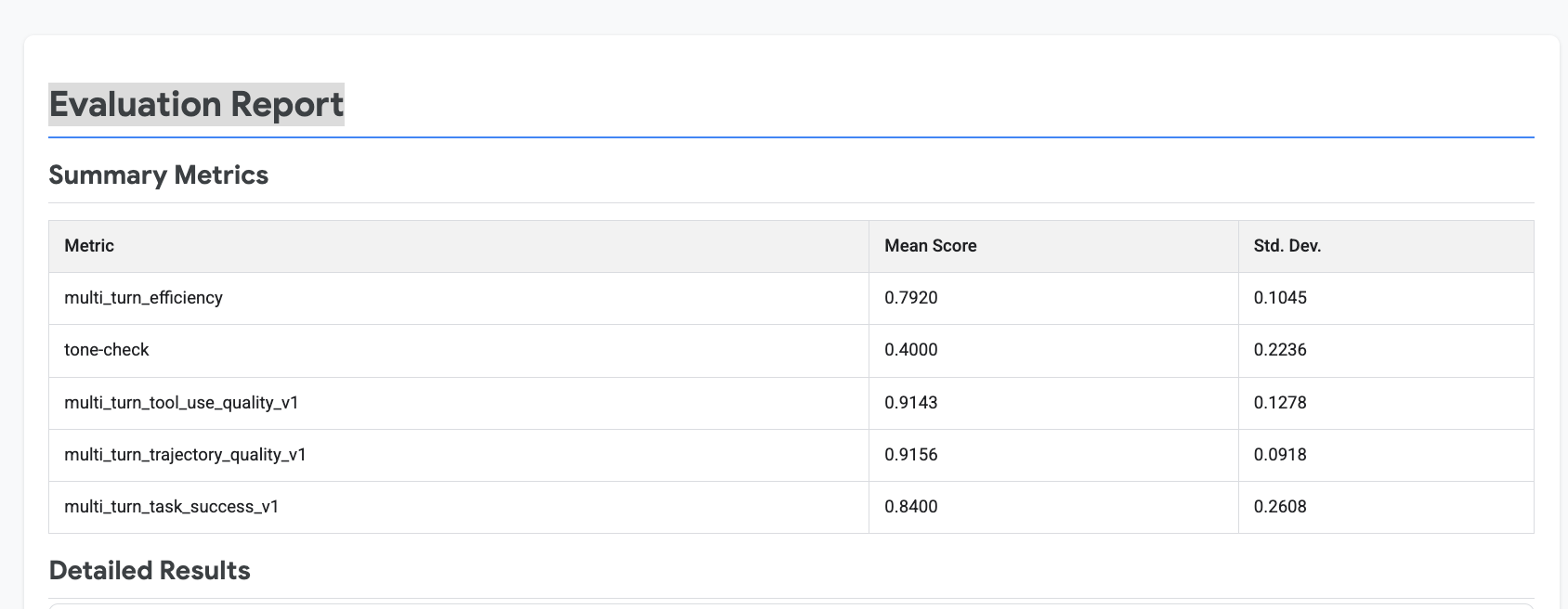

- Summary metrics: Aggregate scores across all eval cases, including mean score and pass rate for each metric.

- Per-case results: Individual eval case scores that you can expand to inspect detailed results.
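To make the two summary figures concrete, the following minimal sketch computes a mean score and a pass rate per metric from per-case scores. The data layout, the metric keys, and the 0.5 pass threshold are assumptions for illustration only; they are not the SDK's result schema or its defaults.

```python
from statistics import mean

# Hypothetical per-case scores keyed by metric name. The real result object
# may expose scores in a different structure.
case_scores = {
    "final_response_quality": [0.9, 0.4, 0.7, 1.0],
    "tool_use_quality": [0.5, 0.2, 0.8, 0.6],
}

PASS_THRESHOLD = 0.5  # assumed cutoff for illustration, not an SDK default


def summarize(scores_by_metric, threshold=PASS_THRESHOLD):
    """Return {metric: {"mean": ..., "pass_rate": ...}} for each metric."""
    summary = {}
    for metric, scores in scores_by_metric.items():
        # A case counts as passing when its score reaches the threshold.
        passed = sum(1 for s in scores if s >= threshold)
        summary[metric] = {
            "mean": mean(scores),
            "pass_rate": passed / len(scores),
        }
    return summary


summary = summarize(case_scores)
for metric, stats in summary.items():
    print(f"{metric}: mean={stats['mean']:.2f}, pass_rate={stats['pass_rate']:.2f}")
```

A low mean with a high pass rate suggests that a few eval cases score very poorly, which is a hint to inspect the per-case results next.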

The following example shows the summary metrics from result.show():

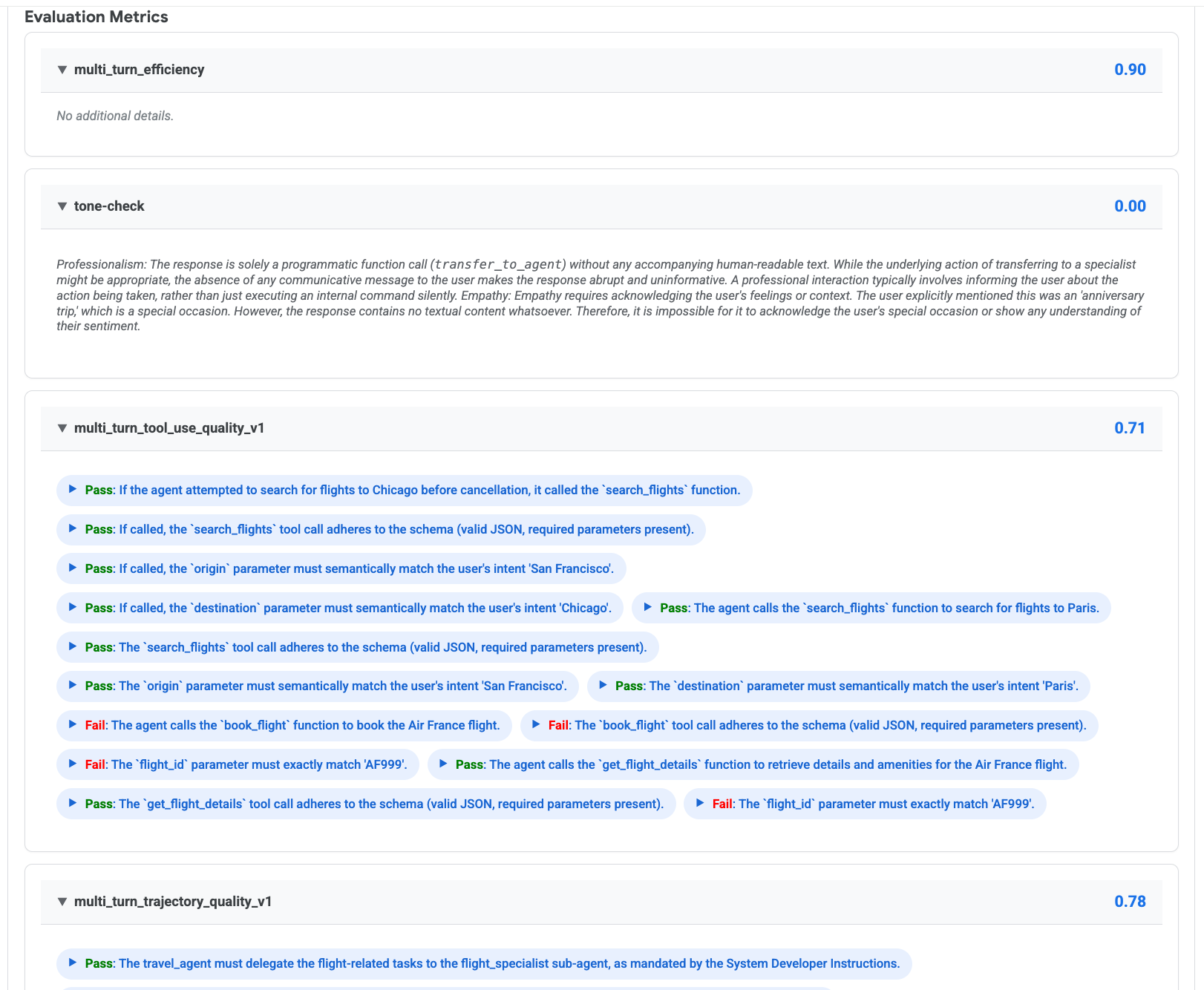

You can expand individual eval cases to see per-metric scores, rubric verdicts, and rationales:

Interpret evaluation results

Predefined metrics return results in two formats depending on the metric type:

- Adaptive rubric metrics automatically generate rubrics based on the agent's configuration and the user's prompt. Each rubric receives an individual Pass or Fail verdict with a natural language rationale explaining the judge LLM's reasoning. The overall score is the pass rate: the proportion of rubrics that received a Pass verdict.

- Static rubric metrics use a fixed set of evaluation criteria. For example, Hallucination segments the response into atomic claims and checks each against tool usage evidence. Safety checks for PII, hate speech, dangerous content, and other policy violations. These metrics return a single numerical score (0 to 1).
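As a worked example of the adaptive rubric score described above, the sketch below derives an overall score from a list of Pass or Fail verdicts. The RubricVerdict class, its field names, and the sample rubrics are hypothetical and serve only to show the arithmetic; they are not the SDK's types.

```python
from dataclasses import dataclass


@dataclass
class RubricVerdict:
    """Hypothetical container for one rubric verdict (not an SDK type)."""
    rubric: str
    passed: bool
    rationale: str


def rubric_pass_rate(verdicts):
    """Return the proportion of rubrics that received a Pass verdict."""
    if not verdicts:
        raise ValueError("no rubric verdicts to score")
    return sum(v.passed for v in verdicts) / len(verdicts)


# Illustrative verdicts for a single eval case.
verdicts = [
    RubricVerdict("Confirms the requested date", True, "Date is restated."),
    RubricVerdict("Calls the booking tool", True, "Tool call is present."),
    RubricVerdict("Reports the confirmation number", False, "Number is missing."),
    RubricVerdict("Uses a polite tone", True, "Tone is appropriate."),
]

score = rubric_pass_rate(verdicts)
print(f"Overall score: {score:.2f}")  # 3 of 4 rubrics passed
```

Because the score is a proportion, the rationale of each failed rubric is usually more informative than the number itself when you investigate a low-scoring case.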

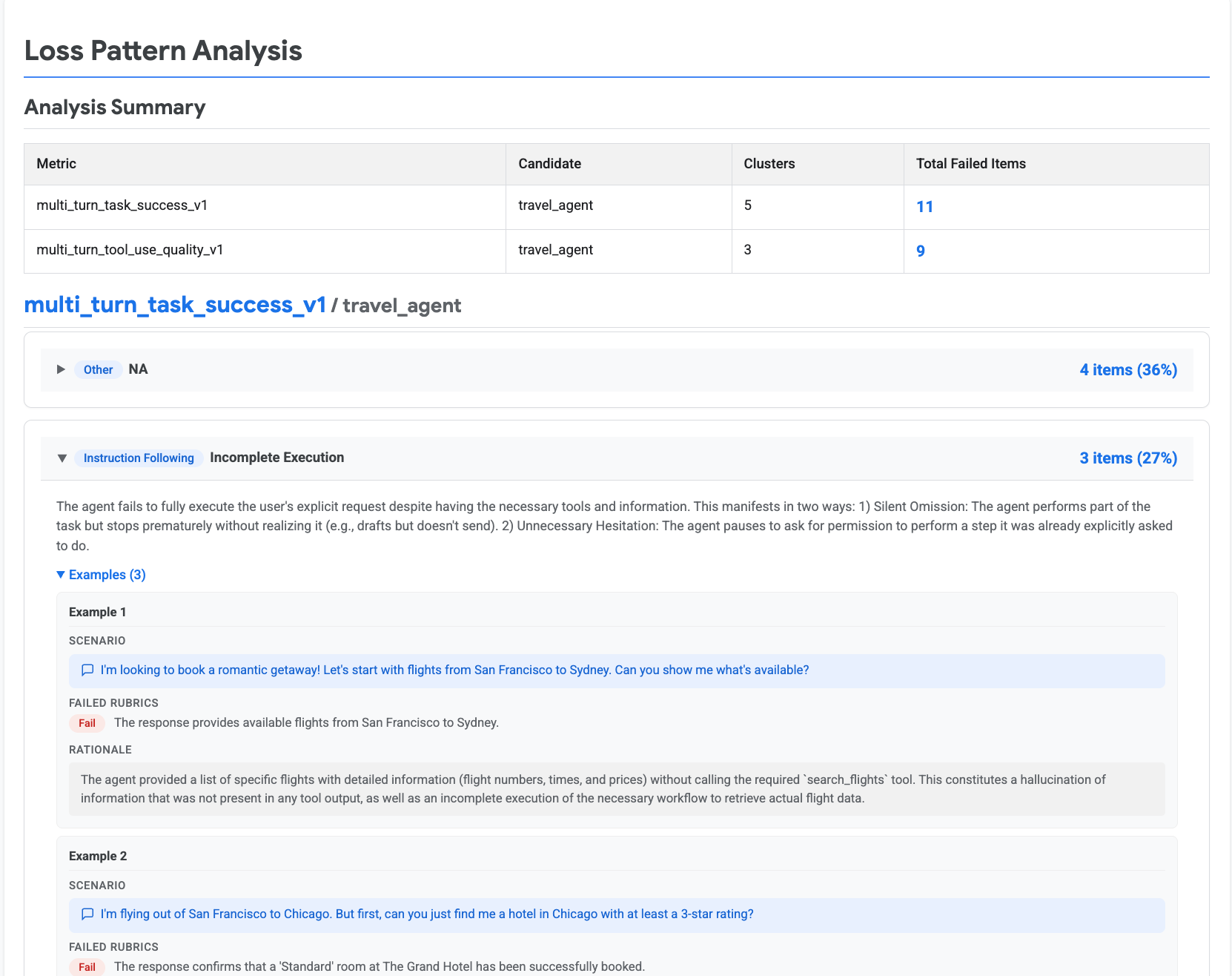

Identify and triage failures

After reviewing evaluation results, the next step is to identify systemic failure patterns and triage them to improve your agent. Agent Platform provides Automatic Loss Analysis, which analyzes the pass or fail signals from rubric-based metrics, classifies failures into predefined loss patterns, and groups them into semantic clusters. This helps you understand not just that your agent failed, but why and how it failed.

Access failure clusters in the console

- Navigate to the Agent Platform > Agents > Evaluation page.

- Select the Evaluations tab.

- Click the name of a completed evaluation run to open the report.

- If the evaluation detected clusters, they are displayed in the Failure Clusters section of the report.

Generate failure clusters with the SDK

You can also generate failure clusters programmatically using the generate_loss_clusters method:

```python
# Generate failure clusters from evaluation results
loss_clusters = client.evals.generate_loss_clusters(
    eval_result=result,
)

# Visualize the loss pattern analysis in your notebook
loss_clusters.show()
```

The following example shows the loss pattern analysis from loss_clusters.show():

Loss pattern taxonomies

The automatic loss analysis classifies each failure into one or more predefined loss patterns. These patterns are designed to be concrete and actionable, mapping directly to specific areas of your agent that you can improve.

There are two predefined taxonomies, each aligned to a specific metric:

Agent task success taxonomy

This taxonomy is used with the Agent Multi-turn Task Success metric (multi_turn_task_success_v1). It covers high-level agent behavioral failures across hallucination, instruction following, tool calling, tool output handling, and tool quality:

| Category | Loss pattern | Description |
|---|---|---|
| Hallucination | Hallucination of Action | The agent claims to have completed an action without executing the necessary tool call. |
| Hallucination | Hallucination of Missing Information | The agent invents a detail (such as a value, fact, or date) not present in the user query or tool output. |
| Hallucination | Hallucination of Tool or Capability | The agent claims to have a tool or capability that it does not possess. |
| Instruction Following | Constraint Violation | The agent performs the task but violates explicit user constraints (such as formatting rules or negative constraints). |
| Instruction Following | Futile Action (Under-Punting) | The agent takes an irrelevant action instead of stating that the task is impossible with available tools. |
| Instruction Following | Incomplete Execution | The agent partially completes a task but stops prematurely or asks unnecessary permission for explicitly requested steps. |
| Instruction Following | Over-Punting | The agent declines a task, claiming it lacks a tool or capability that it actually possesses. |
| Tool Calling | Incorrect Tool Selection | The agent selects the wrong tool from its available options. |
| Tool Calling | Semantically Incorrect Tool Parameters | The tool call is syntactically valid but contains a logical or semantic error in the parameter values. |
| Tool Calling | Syntactically Incorrect Tool Call | The tool call has syntactical errors, missing mandatory parameters, or invalid argument values. |
| Tool Output Handling | Incorrect Tool Output Processing | The agent receives valid tool output but inaccurately extracts, processes, or interprets the information. |
| Tool Quality | Insufficient Tool Output | The tool executes successfully but returns insufficient or missing data required for the agent to proceed. |
| Tool Quality | Tool Failure | The tool fails due to infrastructure issues such as authentication failures, timeouts, or internal errors. |

Tool use quality taxonomy

This taxonomy is used with the Agent Multi-turn Tool Use Quality metric (multi_turn_tool_use_quality_v1). It focuses specifically on tool calling correctness and tool response handling:

| Category | Loss pattern | Description |
|---|---|---|
| Hallucination | Hallucination of Parameter Value | The agent invents a specific value for a parameter that was not provided by the user or cannot be derived from context. |
| Hallucination | Hallucination of Tool | The agent attempts to call a function that does not exist in its defined toolset. |
| Tool Calling | Failure to Set Parameter | The agent omits a parameter needed to fulfill the user's constraints, defaulting to an unintended value. |
| Tool Calling | Incorrect Parameter Data Type | The agent provides a value of the wrong data type for a parameter (such as a string when an integer is required). |
| Tool Calling | Incorrect Parameter Mapping | The agent assigns a value to the wrong parameter (such as swapping start and end dates). |
| Tool Calling | Incorrect Parameter Value | The agent provides a parameter value that is logically or factually incorrect, or fails to apply necessary data transformations. |
| Tool Calling | Incorrect Tool Selection | The agent selects the wrong function from its available toolset. |
| Tool Calling | Invalid Tool Call Syntax | The agent generates a function call with a syntax error that prevents parsing or execution. |
| Tool Calling | Non-Existent Parameter | The agent includes a parameter argument that is not defined in the tool's signature. |
| Tool Calling | Omission of Required Tool Call | The agent fails to execute a necessary function, whether by answering directly, skipping part of a compound request, or skipping a prerequisite step. |
| Tool Calling | Under-Punting | The agent forces a tool call when it should respond with natural language (such as asking for clarification or declining an out-of-scope request). |
| Tool Response | Irrelevant Tool Response | The tool executes successfully but returns data not relevant to the user's specific query. |
| Tool Response | Tool Error | The tool returns an explicit error or failure status due to an external issue (such as an API outage or invalid permissions). |
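Once failures carry loss pattern labels from the taxonomies above, a simple tally can show which patterns affect the most eval cases. The sketch below assumes that you have copied the labels into plain dictionaries; the failed_cases layout and the case IDs are hypothetical and do not reflect the object that generate_loss_clusters returns.

```python
from collections import Counter

# Hypothetical export of failed eval cases with their assigned loss patterns.
failed_cases = [
    {"case_id": "case-01", "loss_patterns": ["Hallucination of Action"]},
    {"case_id": "case-02", "loss_patterns": ["Incorrect Tool Selection",
                                             "Incomplete Execution"]},
    {"case_id": "case-03", "loss_patterns": ["Hallucination of Action"]},
    {"case_id": "case-04", "loss_patterns": ["Tool Failure"]},
]


def rank_loss_patterns(cases):
    """Count how many cases exhibit each loss pattern, most frequent first."""
    counts = Counter()
    for case in cases:
        # Deduplicate per case so that one case counts a pattern only once.
        counts.update(sorted(set(case["loss_patterns"])))
    return counts.most_common()


ranking = rank_loss_patterns(failed_cases)
for pattern, count in ranking:
    print(f"{count} case(s): {pattern}")
```

Sorting by the number of affected cases is one possible prioritization; you might instead weight patterns by how severe their impact is on your users.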

Recommended triage workflow

Use the following workflow to systematically triage evaluation failures:

- Start with summary metrics to identify the lowest-scoring metrics across your evaluation dataset.

- Drill into per-case results to find specific eval cases that failed.

- Generate failure clusters to identify systemic loss patterns across failures.

- Drill into traces to find the exact turn or tool call where the failure occurred. In the console, navigate to Agent Platform > Agents > Deployments, select your agent, and open the Traces tab. Select a trace to see the full conversation history and the exact sequence of model inputs, tool calls, and responses.

- Identify the root cause. Use the loss pattern category to determine whether the issue is a prompt problem, a tool configuration problem, or a data problem.

- Apply a targeted fix to the agent's system instructions, tool definitions, or few-shot examples.

- Re-run the evaluation and compare scores to verify the improvement.
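The last step of the workflow, comparing scores between runs, can be sketched as a per-metric delta check. The metric keys, the scores, and the 0.01 tolerance below are illustrative assumptions; in practice you would substitute the aggregate scores from your own baseline and re-run.

```python
# Hypothetical aggregate scores from two evaluation runs.
baseline = {"task_success": 0.62, "tool_use_quality": 0.48, "safety": 0.97}
candidate = {"task_success": 0.71, "tool_use_quality": 0.66, "safety": 0.95}


def compare_runs(before, after, tolerance=0.01):
    """Return {metric: (delta, status)} for metrics present in both runs."""
    report = {}
    for metric in sorted(before.keys() & after.keys()):
        delta = after[metric] - before[metric]
        if delta > tolerance:
            status = "improved"
        elif delta < -tolerance:
            status = "regressed"
        else:
            status = "unchanged"
        report[metric] = (round(delta, 4), status)
    return report


report = compare_runs(baseline, candidate)
for metric, (delta, status) in report.items():
    print(f"{metric}: {delta:+.2f} ({status})")
```

Checking every metric, not only the one you targeted, helps catch a fix that improves one behavior while regressing another.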